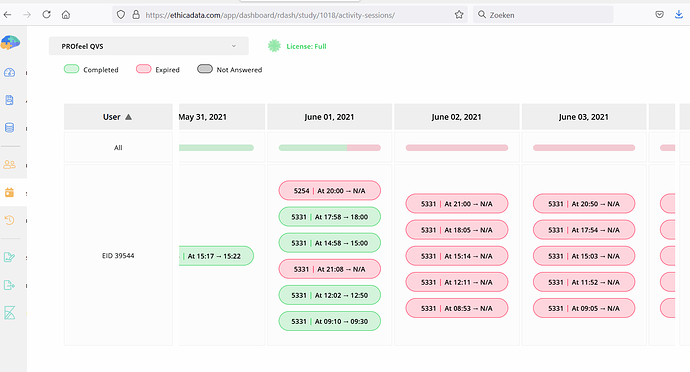

We have subjects who receive notifications and complete their surveys, but their results are no longer visible in the system. For example, in study 1018, subject 39544 has no data available from June 2 until today, yet the participant audit logs show activities for this subject that should have produced data.

Hi @janhoutveen

The conditions defined in your study produce ~1000 survey sessions, of which one third are active and two thirds are disabled and will be activated depending on the participant’s responses. This makes survey response submission very slow and causes response submissions from the app to fail. The maximum our server can currently handle per participant is ~400 sessions (40% of your current setting).

That’s why, for this participant, the app kept uploading survey responses and the server kept failing to save them. In this case, the app noticed that the submission had failed, so it saved the survey for the next attempt. On the next attempt it uploaded both the new response and the previous one, doubling the load. That’s why you saw no responses.
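As a rough illustration of how the retries pile up, here is a minimal Python sketch. It is not the app's actual code: `simulate_uploads` and `server_accepts` are hypothetical names, and the model simply assumes that every failed upload is kept and resent together with the next response.

```python
def simulate_uploads(num_surveys, server_accepts):
    """Return the number of responses the app sends on each upload attempt.

    Hypothetical model: every unsaved response stays in a pending queue
    and is resent together with the next completed survey.
    """
    pending = 0
    batch_sizes = []
    for _ in range(num_surveys):
        pending += 1                  # participant completes a new survey
        batch_sizes.append(pending)   # app uploads everything still pending
        if server_accepts(pending):
            pending = 0               # server saved the batch, queue is cleared
    return batch_sizes


# Assumed worst case: the server fails to save every batch.
print(simulate_uploads(5, lambda batch: False))  # [1, 2, 3, 4, 5]
```

Under this assumption each failed attempt makes the next upload larger, which makes the next failure more likely.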

To resolve this case, I set the system to manually process the responses from this participant. You need to ask the participant to uninstall and reinstall the app to continue. Don’t worry, no data is lost. Everything is now available on the dashboard.

To prevent this problem, you need to simplify some of the criteria used in the Triggering Logics of your surveys.

Hope it clarifies,

Mohammad

Hi Mohammad,

Thank you for your answer and for manually fixing it for 39544. We will ask 39544 to reinstall the app.

Can you please solve this same issue in a similar way for 39601?

Can you also help me fix this problem in the criteria of study 1018? I don’t know what to do to solve it. Many subjects are currently included in this study, and I cannot change the study conditions.

But maybe something else is going on. I do not completely understand it. In study 1018 there are 3 surveys that generate intensive longitudinal survey sessions. However, based on the answer to baseline survey 5328 Q15, two of them are always disabled for a subject (criteria: Q5328_15 == 1, 2 or 3). Thus, those two surveys cannot generate survey sessions. The one active survey out of the three will generate 5 sessions a day in two sets of 30 days, that is 5 x 30 x 2 = 300 survey sessions. Note that a fourth survey generates a survey session once a week for a year (52 survey sessions). Added up, this makes about 350 survey sessions, which is less than 400. This would explain why two sets of intensive survey sessions did not cause a problem for subjects 35278, 34270, 35307 and 34842.
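The count above can be checked with a few lines of Python. The variable names are only illustrative, and the calculation assumes that disabled surveys contribute no sessions:

```python
SESSIONS_PER_DAY = 5    # intensive survey sessions per day
DAYS_PER_PERIOD = 30    # length of one assessment period
PERIODS = 2             # two sets of 30 days
WEEKLY_SESSIONS = 52    # fourth survey: once a week for a year
SERVER_LIMIT = 400      # approximate per-participant limit mentioned above

intensive = SESSIONS_PER_DAY * DAYS_PER_PERIOD * PERIODS
total = intensive + WEEKLY_SESSIONS

print(intensive)             # 300
print(total)                 # 352
print(total < SERVER_LIMIT)  # True
```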

regards

Jan

I applied the same fix to 39601 as well.

I understand that, as the study is in progress, it can be a bit tricky. The solution would be to use fewer triggering logics per survey. Ethica creates 50 sessions per triggering logic (TL), which in your case, with 3 surveys and 9 TLs per survey, adds up to ~1400 sessions. We are working to improve the performance of this part. Alternatively, you can let us know (email support@ethicadata.com) so we can apply the same manual fix until then.
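For reference, a back-of-the-envelope version of that estimate in Python. The function name is hypothetical, and the sketch assumes every TL of every survey is counted, whether or not the survey is active for the participant:

```python
SESSIONS_PER_TL = 50  # sessions created per triggering logic, as stated above


def estimated_sessions(surveys, tls_per_survey, sessions_per_tl=SESSIONS_PER_TL):
    """Estimate the number of sessions created for one participant."""
    return surveys * tls_per_survey * sessions_per_tl


print(estimated_sessions(3, 9))  # 1350, i.e. roughly the ~1400 quoted above
print(estimated_sessions(1, 9))  # 450, if only one survey were counted
```

Even a single survey with 9 TLs would exceed the ~400 session limit under this estimate, which is why reducing the number of TLs per survey helps.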

Mohammad

Hi Mohammad,

Thank you for applying the manual fixes. We, however, still do not completely understand it.

- In this study there are 10 triggering logics (TLs) in each survey. You mentioned that Ethica creates 50 sessions per TL. That is enough for 50 days at one session a day, but it may not be enough for longer assessment periods. Fortunately, a TL continues for only 30 days in this study.

- Is it really true that all TLs of a survey that is inactive for a specific subject, because its survey criterion evaluates to false, still count towards the maximum of 400 sessions? If this is true, the survey criteria method of personalizing a study by selecting one out of several surveys based on the answers in a baseline survey (used in this study and in many other studies at several universities in the Netherlands) is currently unusable.

- We still do not understand why we already have had several participants that completed two time periods of ESM and who did not experience this problem.

- This issue has serious consequences for the current study, which is already almost halfway through. When do you expect this issue to be fixed, and what does applying the same manual fix until then mean for our workflow? Do we have to ask all our patients to uninstall and reinstall Ethica halfway through?

regards

Jan Houtveen

Anouk Vroegindeweij

Hi Jan,

I asked the team to temporarily increase the timeout for your study’s requests, so this issue should not happen again.

Also, if the study is not intended to continue indefinitely (as I believe is the case here), please try to set the proper end-time for the registrations. That helps a lot.

Thanks,

Mohammad

Hi Mohammad,

Thank you for increasing the timeout.

I have changed the participation duration to 365 days. I do not know yet how long inclusion and data collection will continue, so I cannot set an end date for the participation period.

regards

Jan

Hi Jan,

The problem seems to be related to the participant responding to surveys offline for a while, so that the app now tries to upload ~20 survey responses at once. This, together with the criteria evaluation I explained above, causes the problem. We are getting errors from participant ID 39601. She should reinstall the app and then continue (no data will be lost). She should also try to upload responses regularly. The Data Sync option in the app clearly shows what is being uploaded.

Thanks,

Mohammad